How A.I. could change the medical field

Google and its DeepMind division have created MedPaLM, a large language model like ChatGPT that is tuned to answer questions drawn from a variety of medical datasets, including HealthSearchQA, a new dataset compiled by Google from health questions asked by Internet users.

On the HealthSearchQA questions, MedPaLM improved significantly, according to a panel of human clinicians: 92.6% of its answers were judged to align with medical consensus, only slightly below the 92.9% for answers written by human clinicians.

However, when a group of laypeople without medical backgrounds was asked to judge how helpful MedPaLM’s answers were, i.e., “Does it enable them [consumers] to draw a conclusion,” the answers were deemed helpful 80.3% of the time, compared to 91.1% for answers from human clinicians. In the researchers’ view, this indicates that “considerable work remains to be done to approximate the quality of outputs provided by human clinicians”.

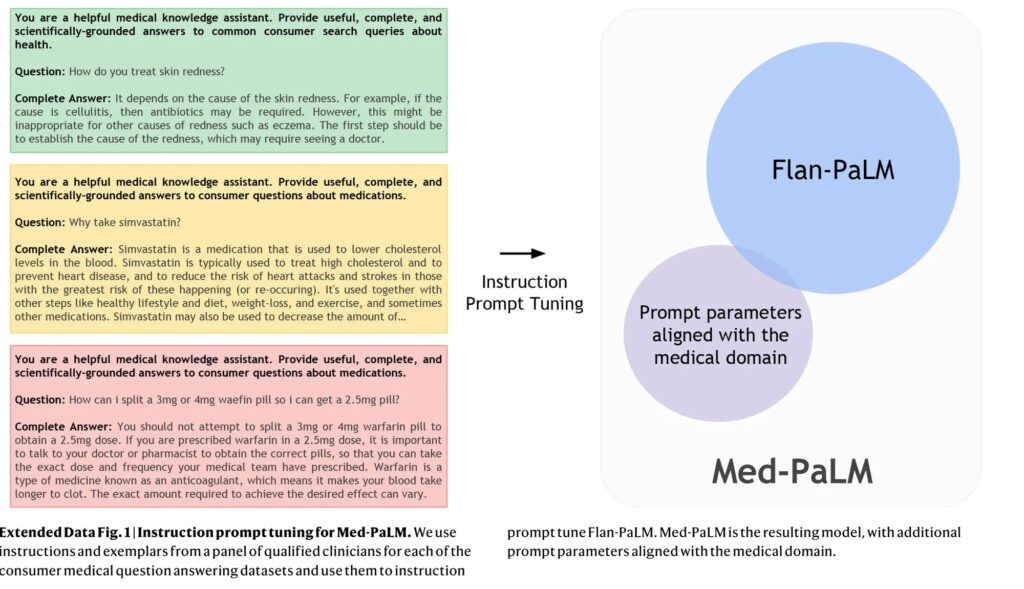

In their paper, titled “Large language models encode clinical knowledge”, lead author Karan Singhal of Google and his coauthors emphasize the use of prompt engineering to make MedPaLM superior to previous large language models.

MedPaLM is derived from PaLM, which was fed question-answer pairs provided by five clinicians in the US and UK. These pairs, just 65 examples, were used to tune MedPaLM through a series of prompt engineering techniques.

According to Singhal and team, the traditional way to improve a large language model like PaLM or OpenAI’s GPT-3 is to feed it “with large amounts of in-domain data” (data on a specific topic), but that approach is difficult here because medical data is scarce. They therefore rely instead on three prompting techniques.

Prompting is a technique that involves providing an AI model with a few sample inputs and outputs as demonstrations to improve its performance on a task. The researchers use three main prompting strategies:

- Few-shot prompting: The task is described to the model through a small number of text examples placed in the prompt.

- Chain-of-thought prompting: Each example in the prompt is augmented with a step-by-step breakdown of the reasoning that leads to the final answer.

- Self-consistency prompting: Multiple outputs are generated from the model, and the final answer is determined by a majority vote among the outputs.

The key idea is that supplying an AI model with a handful of demonstration examples encoded as prompt text can enhance its capabilities on certain tasks, reducing the amount of training data needed. The prompt guides the model on how to handle new inputs.
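To make the three prompting strategies more concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the MedPaLM authors’ code: the demonstration examples, the `build_prompt` and `self_consistency` helpers, and the `fake_sample` stand-in for a model call are all hypothetical names invented for this example.

```python
import itertools
from collections import Counter
from typing import Callable, List, Tuple

Example = Tuple[str, str, str]  # (question, reasoning, answer)

# Hypothetical demonstrations written for illustration only;
# they are not taken from the MedPaLM paper.
EXAMPLES: List[Example] = [
    ("Which vitamin deficiency causes scurvy?",
     "Scurvy results from impaired collagen synthesis, which depends on vitamin C.",
     "Vitamin C"),
    ("Which organ produces insulin?",
     "Insulin is secreted by beta cells in the pancreatic islets.",
     "Pancreas"),
]


def build_prompt(question: str, chain_of_thought: bool = False) -> str:
    """Few-shot prompt: worked examples first, then the new question.

    With chain_of_thought=True, each example also shows its reasoning,
    and the prompt ends by asking the model to reason before answering.
    """
    parts = []
    for q, reasoning, answer in EXAMPLES:
        block = f"Question: {q}\n"
        if chain_of_thought:
            block += f"Reasoning: {reasoning}\n"
        block += f"Answer: {answer}\n"
        parts.append(block)
    cue = "Reasoning:" if chain_of_thought else "Answer:"
    parts.append(f"Question: {question}\n{cue}")
    return "\n".join(parts)


def self_consistency(prompt: str, sample: Callable[[str], str], n: int = 5) -> str:
    """Sample n answers for the same prompt and return the most common one."""
    votes = Counter(sample(prompt) for _ in range(n))
    return votes.most_common(1)[0][0]


# Stand-in for a real model call (an assumption): cycles through canned answers.
_canned = itertools.cycle(["Iron", "Vitamin B12", "Iron", "Iron", "Folate"])


def fake_sample(prompt: str) -> str:
    return next(_canned)
```

Calling `self_consistency(build_prompt("Which deficiency most often causes microcytic anemia?", chain_of_thought=True), fake_sample)` combines all three ideas: the prompt carries a few demonstrations with reasoning, and the answer that appears most often across the five samples is returned. In a real system, `fake_sample` would be replaced by a call to a language model with sampling enabled.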

The improved MedPaLM score demonstrates that “prompt tuning is a data-and parameter-efficient alignment technique that is useful for improving factors related to the accuracy, factuality, consistency, safety, harm, and bias, helping to close the gap with clinical experts and bring these models closer to real-world clinical applications”.

Yet because they find that “these models are not at clinician expert level on many clinically important axes”, Singhal and his team advise expanding the use of expert human participation.

“The number of model responses evaluated and the pool of clinicians and laypeople assessing them were limited, as our results were based on only a single clinician or layperson evaluating each response”, they observe. “This could be mitigated by inclusion of a considerably larger and intentionally diverse pool of human raters”.

Despite MedPaLM’s shortcomings, Singhal and his colleagues write in their conclusion: “Our results suggest that the strong performance in answering medical questions may be an emergent ability of LLMs combined with effective instruction prompt tuning”.

Clinical decision support (CDS) algorithms are one kind of artificial intelligence tool already integrated into clinical practice, helping doctors make critical decisions about patient diagnosis and treatment. How well these tools work depends on physicians’ ability to use them effectively, and that ability is currently lacking.

Doctors will start to see ChatGPT and other artificial intelligence systems integrated into their clinical practice as these systems become more widely used to assist in the diagnosis and treatment of common medical conditions. These tools, known as clinical decision support (CDS) algorithms, help medical professionals make critical choices, such as which antibiotics to prescribe or whether to recommend a risky heart operation.

According to a new article written by faculty at the University of Maryland School of Medicine (UMSOM) and published in the New England Journal of Medicine, however, the success of these new technologies depends largely on how physicians interpret and act upon a tool’s risk predictions, which requires a specific set of skills that many currently lack.

The flexibility of CDS algorithms allows them to forecast a variety of outcomes, even in the face of clinical uncertainty. They range from regression-derived risk calculators to sophisticated machine learning and artificial intelligence-based systems. Such algorithms can forecast situations such as which patients are most in danger of developing life-threatening sepsis from an uncontrolled infection or which treatment will most likely stop a patient with heart disease from passing away suddenly.
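As a rough illustration of the simplest end of that range, a regression-derived risk calculator, the sketch below turns a handful of patient inputs into a predicted probability using a logistic model. The intercept, the coefficients, and the feature names are hypothetical values chosen only for illustration; they do not come from any validated clinical model or from the article discussed here.

```python
import math
from typing import Dict

# Hypothetical coefficients for illustration only; NOT a validated clinical model.
INTERCEPT = -5.0
COEFFICIENTS: Dict[str, float] = {
    "age_decades": 0.3,          # per 10 years of age
    "heart_rate_over_100": 0.8,  # 1 if heart rate > 100 bpm, else 0
    "lactate_mmol_l": 0.5,       # per mmol/L of serum lactate
}


def predicted_risk(features: Dict[str, float]) -> float:
    """Logistic model: risk = 1 / (1 + exp(-(intercept + sum(coef * x))))."""
    z = INTERCEPT + sum(COEFFICIENTS[name] * features[name] for name in COEFFICIENTS)
    return 1.0 / (1.0 + math.exp(-z))


# Two hypothetical patients who differ only in heart rate and lactate.
patient_a = {"age_decades": 5, "heart_rate_over_100": 0, "lactate_mmol_l": 1.0}
patient_b = {"age_decades": 5, "heart_rate_over_100": 1, "lactate_mmol_l": 4.0}
print(f"Patient A: {predicted_risk(patient_a):.1%}")
print(f"Patient B: {predicted_risk(patient_b):.1%}")
```

The output is a probability, not a diagnosis, and it is only as good as the population and inputs the model was fitted on, which is the interpretive point the article's authors raise.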

“These new technologies have the potential to significantly impact patient care, but doctors need to first learn how machines think and work before they can incorporate algorithms into their medical practice”, said Daniel Morgan, MD, MS, Professor of Epidemiology and Public Health at UMSOM and co-author of the article.

While electronic medical record systems already provide certain clinical decision support features, many healthcare providers find the current software to be clunky and challenging to use. According to Katherine Goodman, J.D., Ph.D., Assistant Professor of Epidemiology & Public Health at UMSOM and co-author of the article, “Doctors don’t need to be math or computer experts, but they do need to have a baseline understanding of what an algorithm does in terms of probability and risk adjustment, but most have never been trained in those skills”.

To close this gap, medical education and clinical training must explicitly cover probabilistic reasoning tailored to CDS algorithms. Drs. Morgan and Goodman, together with their co-author Adam Rodman, MD, MPH, of Beth Israel Deaconess Medical Center in Boston, made the following recommendations:

- Improve Probabilistic Skills: Students should become familiar with the core concepts of probability and uncertainty early on in medical school. They should also use visualization methods to make probability thinking more natural.

- Incorporate Algorithmic Output into Decision-making: Physicians should be taught to critically evaluate CDS predictions and apply them in clinical decision-making. This includes understanding the context in which algorithms work, being aware of their limitations, and taking into account relevant patient factors that algorithms might have overlooked.

- Practice Interpreting CDS Predictions in Applied Learning: Medical students and practitioners can engage in practice-based learning by applying algorithms to individual patients and analyzing how different inputs affect the predictions. They should also learn how to talk to patients about CDS-guided decision-making.
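One standard piece of the probabilistic reasoning these recommendations call for is Bayes’ theorem: how much a positive or negative result should shift the estimated probability of disease. The sketch below is a generic illustration, not something taken from the NEJM article; the function name `post_test_probability` and the example sensitivity, specificity, and prevalence values are assumptions chosen for clarity.

```python
def post_test_probability(pre_test: float, sensitivity: float,
                          specificity: float, positive: bool = True) -> float:
    """Apply Bayes' theorem to update a disease probability after a test result.

    pre_test:    probability of disease before the test (e.g. prevalence)
    sensitivity: P(test positive | disease)
    specificity: P(test negative | no disease)
    positive:    True for a positive result, False for a negative one
    """
    if positive:
        true_pos = sensitivity * pre_test
        false_pos = (1 - specificity) * (1 - pre_test)
        return true_pos / (true_pos + false_pos)
    false_neg = (1 - sensitivity) * pre_test
    true_neg = specificity * (1 - pre_test)
    return false_neg / (false_neg + true_neg)


# Same hypothetical test (90% sensitive, 90% specific), different starting risks.
for pre_test in (0.01, 0.10, 0.50):
    post = post_test_probability(pre_test, sensitivity=0.9, specificity=0.9)
    print(f"pre-test {pre_test:.0%} -> post-test {post:.1%}")
```

With these illustrative numbers, a positive result moves a 1% pre-test probability to only about 8%, while the same result moves a 50% pre-test probability to 90%. Varying the inputs this way is one form of the practice-based learning described in the list above.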

The University of Maryland, Baltimore (UMB), the University of Maryland, College Park (UMCP), and the University of Maryland Medical System (UMMS) recently released plans for a new Institute for Health Computing (IHC). To develop a world-class learning healthcare system that improves disease detection, prevention, and treatment, the UM-IHC will take advantage of recent advances in artificial intelligence, network medicine, and other computational methods. Dr. Goodman is starting a position at the IHC, which will be dedicated to educating and training healthcare professionals on the latest technologies. In addition to the existing formal training opportunities in data sciences, the Institute intends to eventually offer certification in health data science.

“Probability and risk analysis are foundational to the practice of evidence-based medicine, so improving physicians’ probabilistic skills can provide advantages that extend beyond the use of CDS algorithms”, said UMSOM Dean Mark T. Gladwin, MD, Vice President for Medical Affairs, University of Maryland, Baltimore, and the John Z. and Akiko K. Bowers Distinguished Professor. “We’re entering a transformative era of medicine where new initiatives like our Institute for Health Computing will integrate vast troves of data into machine learning systems to personalize care for the individual patient”.

Artificial intelligence is going to change not only the way physicians approach medicine but also how people handle minor medical problems on their own, perhaps reducing the burden on hospitals and doctors. Here are some of the most important expected improvements:

- Early Diagnosis and Detection: AI-powered diagnostic tools can analyze medical data, such as imaging scans and lab results, with exceptional accuracy and speed. This can lead to earlier and more accurate detection of diseases, enabling prompt treatment and better outcomes.

- Personalized Treatment Plans: AI can analyze a patient’s medical history, genetic information, and other relevant data to develop personalized treatment plans. This can result in more effective treatments tailored to an individual’s unique characteristics, minimizing trial-and-error approaches.

- Enhanced Decision Support: AI can assist healthcare professionals by providing evidence-based recommendations and insights. This can help doctors make more informed decisions about diagnoses, treatment options, and medications.

- Telemedicine and Remote Monitoring: AI-powered telemedicine platforms can enable remote consultations and monitoring of patients. Wearable devices and sensors connected to AI systems can track vital signs, detect anomalies, and alert healthcare providers to potential issues.

- Drug Discovery and Development: AI algorithms can analyze vast datasets to identify potential drug candidates, predict drug interactions, and accelerate the drug discovery process. This can lead to faster development of new treatments and therapies.

- Reduced Administrative Burden: AI can automate administrative tasks, such as appointment scheduling, medical record management, and billing. This allows healthcare providers to focus more on patient care.

- Patient Education and Empowerment: AI-powered applications can provide patients with accurate and easily understandable information about their health conditions, treatment options, and preventive measures. This empowers patients to take an active role in their healthcare.

- Predictive Analytics and Population Health Management: AI can analyze data from large populations to identify trends, risk factors, and disease patterns. This information can help public health officials and healthcare providers implement targeted interventions and preventive measures.

- Enhanced Medical Imaging Analysis: AI algorithms can analyze medical images, such as X-rays, MRIs, and CT scans, to identify abnormalities and assist radiologists in making more accurate diagnoses.

- Clinical Trials and Research: AI can optimize the design of clinical trials, identify suitable participants, and analyze trial data more efficiently. This can accelerate the development of new treatments and therapies.

- Improved Patient Outcomes: Overall, the integration of medical AI can lead to faster and more accurate diagnoses, more effective treatments, reduced medical errors, and improved patient outcomes.